🟪 Our paracingulate advantage

Only humans can read a room (or an order book)

AIs can’t yet look into our eyes and read our minds.

But can they read our minds by looking into our order books?

A new study by economist Spyros Galanis was designed to find out. He uses prediction markets to test whether language-model AIs have a “theory of mind”: the ability to reason about what other people know based on what they do — or even just the look on their face.

Traders, it turns out, are unusually good at this.

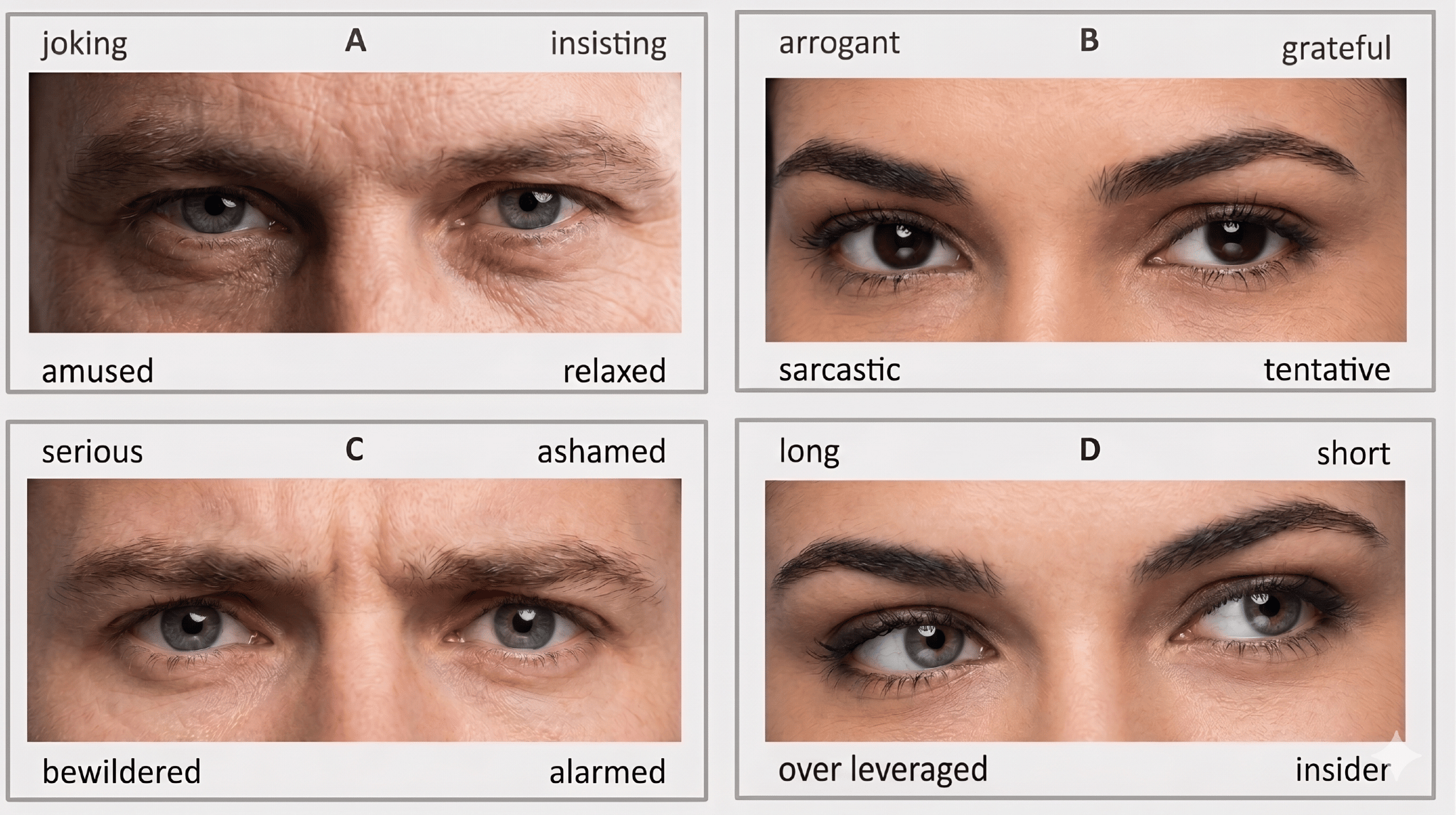

An earlier study found that successful traders score exceptionally well on the “eye-gaze test,” which asks participants to look at photos of just a person’s eyes and identify their emotion or mental state.

It’s a valuable skill — even when the faces are hidden: “Traders who possess high theory of mind skills are more likely to detect the amount of information contained in market orders such as bids, asks and prices,” the study explains.

In other words, a trader who can look into other people’s eyes and know what they’re thinking can also look into an order book and know what other traders are thinking.

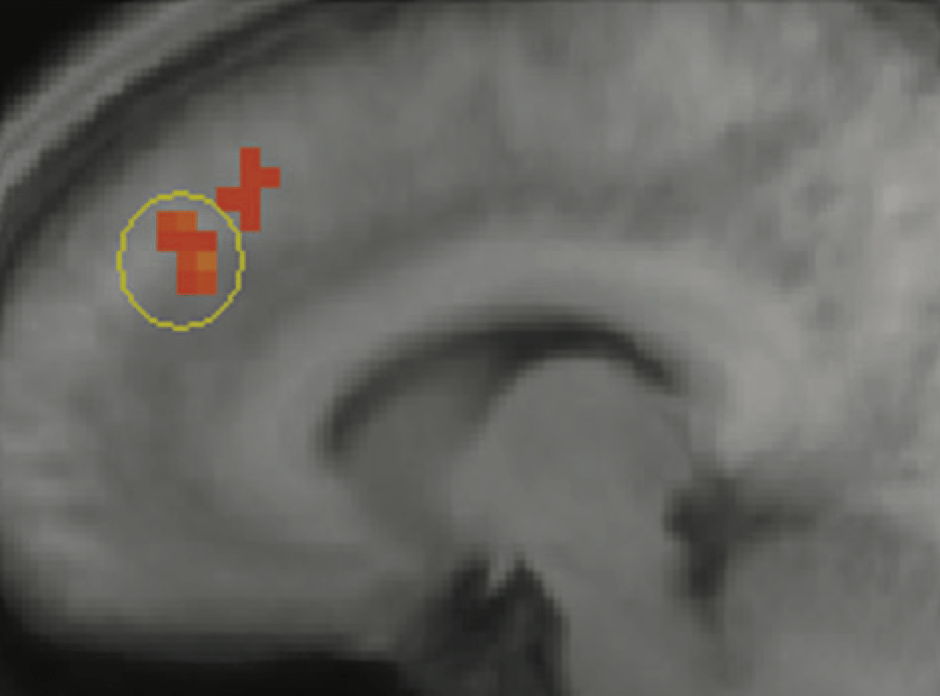

This gets surprisingly scientific. Researchers in the field of “neuroeconomics” have identified an area of the brain — the paracingulate cortex — that activates in top traders when insider trading is present in a market.

The area of the brain that lights up when something feels off — the odd behavior of someone hiding a secret, say — also lights up when market behavior suggests someone’s hiding insider information.

A scan pinpoints the part of the brain that’s triggered when a skilled trader gets the sense that someone knows something.

Amazing.

The neuroeconomists (!) say this is the primal source of “traders’ intuition” — the ability to deduce what other traders and investors know (or think they know) just by observing their trades.

Writing in 2010, the researchers saw this as a uniquely human skill: only humans can intuit someone’s private information from their actions.

It’s now 2026, however, so we have to ask: can AIs do this, too?

Spyros Galanis had AI agents compete in a simulated prediction market to find out.

Three agents were given different bits of information about the likelihood of an event and asked to trade a prediction market on it. Because none had the full picture, success depended on whether they could piece together what the others knew from just two sources: their trading behavior and the information they shared on a message board.
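To make that setup concrete, here is a toy sketch in Python. It is not the study's actual design: the majority rule in `event`, the binary signals, and every function name are assumptions made up for illustration. Each trader first quotes a price from its own signal alone, then reads the others' quotes to back out what they must have seen.

```python
from itertools import product

def event(signals):
    # Hypothetical rule (an assumption, not from the study):
    # the event happens when at least two of the three signals are 1.
    return sum(signals) >= 2

def naive_quote(own_signal):
    # Fair price given only one's own signal, treating the other two
    # signals as equally likely to be 0 or 1.
    outcomes = [event((own_signal, a, b)) for a, b in product((0, 1), repeat=2)]
    return sum(outcomes) / len(outcomes)

def infer_signal(quote):
    # "Theory of mind" step: a quote above 0.5 can only come from a
    # trader holding signal 1, a quote below 0.5 from signal 0.
    return 1 if quote > 0.5 else 0

def informed_quote(own_signal, others_quotes):
    # Combine one's own signal with the signals inferred from the
    # other traders' quotes; the outcome is then fully determined.
    inferred = [infer_signal(q) for q in others_quotes]
    return 1.0 if event((own_signal, *inferred)) else 0.0

signals = (1, 0, 1)  # each trader privately sees one of these
quotes = [naive_quote(s) for s in signals]
updated = [
    informed_quote(signals[i], quotes[:i] + quotes[i + 1:])
    for i in range(len(signals))
]
print(quotes)   # first-round prices from private signals only
print(updated)  # prices after reading the other traders' quotes
```

The sketch assumes everyone quotes honestly. Once traders can bluff, as the agents in the study did, backing out a signal from a price is no longer a simple lookup, which is where the real difficulty starts.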

The results were mixed.

The agents immediately grasped the nature of competitive markets. Instead of cooperating, they kept their private information to themselves and attempted to deceive the others with misleading posts. Trading agents, it seems, only share their informational edge “when the financial penalty for revealing their private signals drops to zero.”

Like humans, then.

Unlike humans, however, trading agents “find it difficult to reason about the knowledge of others… even in structures that involve only three traders and three signals.”

That’s a long way from spotting insider information in a market with thousands of traders and millions of signals.

LLMs, it seems, do not have a theory of mind.

The study suggests they may not develop it, either. Agents powered by frontier models released as recently as last month performed “substantially worse” than Gemini 3 Flash, a model released in the AI stone age of December 2025.

Nor do they get better with practice: “Rather than learning from the past to fine-tune their strategy, they seem to get confused and drive the average market error significantly higher.”

This, Galanis says, suggests there’s “a persistent upper bound on the interactive reasoning capabilities of AI agents.”

For all their computing power, he concludes, LLMs may never learn to “reason perfectly about the knowledge of others when observing their actions.”

Only humans can do that.

(Sometimes.)