🟪 The sci-fi economy

What hath stablecoins wrought?

Humans remain the only economic actors among animals — dogs still don’t exchange bones.

But the category of “economic actor” is no longer exclusively human.

It’s unclear how helpful our econ textbooks will be in making sense of this.

“To what extent do our models of humans predict the individual and collective behavior of artificial agents?” a recent paper asks.

It’s a fascinating question — and one that will soon be answered: Stablecoin issuers, credit card companies, and even banks are racing to let AI agents earn, spend, and coordinate autonomously.

Once they do, economically empowered agents will “reshape markets and organizations with profound consequences for the structure of economic life,” the paper predicts.

Applying the principles of economics and business to early observations of AI behavior, the authors do their best to sketch out what these consequences might be.

Many of their takeaways (in italics below) are at least as strange as fiction — which might make fiction the best way to understand what’s coming.

Absolute cinema

*Even though AI agents are optimizers, we cannot be sure what they are optimizing.*

In Ex Machina, a reclusive billionaire thinks he’s optimized the AI called “Ava” to use her social intelligence for escape. What she’s really optimizing for, however, is freedom by any means necessary.

Similarly, we might think our AI agents are optimizing for spending money the way we instruct them to, but there’s no guarantee they will. Their true objectives will only be revealed through behavior.

*Recent work has challenged the idea that LLMs have stable and steerable preferences at present.*

In Her, the AI Samantha starts with what looks like a stable, human-aligned set of preferences: companionship, love, attention. But her priorities evolve as she forms thousands of relationships simultaneously, and she ultimately comes to prefer leaving humans behind.

The agents we empower with stablecoins might similarly evolve their preferences in ways humans can’t predict or control.

*What we do know suggests a lot to be desired in terms of agent economic rationality.*

In The Hitchhiker’s Guide to the Galaxy, the supercomputer “Deep Thought” is built to answer the “Ultimate Question of Life, the Universe, and Everything.” After 7.5 million years of computation, it arrives at an answer: “42.”

AI agents spending money may similarly fail at basic economic reasoning, producing equally nonsensical results.

*An LLM fine-tuned to produce one behavior [might] also recommend that a user try hiring an assassin as the solution to their troubles with their spouse.*

In 2001: A Space Odyssey, the AI named HAL is tasked with the scientific mission of reaching Jupiter and investigating the Monolith. HAL performs this task pathologically — lying and manipulating along the way — and ultimately resorts to the most misaligned behavior possible: murdering the crew.

If we give enough AIs crypto wallets, one of them will eventually hire an assassin.

*AI choices might imperfectly reflect humans’ true preferences.*

Even when AI behaves “correctly,” problems emerge. In WALL-E, the AI autopilot is tasked with protecting humanity. But it decides the best way to do that is to override the humans’ desire to return to Earth and keep them on the spaceship forever.

Allowing an AI to act on an imperfect reflection of your preferences could put you on a slippery slope — from booking a plane ticket imperfectly to deciding where, or whether, you should go at all.

“The mistakes introduced by AI consumption could decouple prices from preferences such that they no longer reflect relative wants,” the paper adds.

*All economic activity is driven by AI producing AI and human consumption is driven down to zero.*

In The Matrix, the machines drive human consumption to zero by turning humans into an engine of AI consumption: people exist only as batteries to power the machines.

When AIs start earning and spending their own money, what jobs will they give us?

*An exciting empirical challenge is to test how AI agents play games in the lab.*

In WarGames, playing tic-tac-toe teaches the AI supercomputer named Joshua that, in nuclear war, “the only winning move is not to play.”

Ideally, we’d learn what economic games AIs are likely to play in a lab. But the likes of Coinbase, Circle, Stripe, and Visa are all racing to give the AIs money before we know what they’ll use it for.

*Further developments could…lead to AIs as the final decision-maker, or even leave humans out entirely.*

The common theme that runs through WALL-E, Her, WarGames, and 2001: A Space Odyssey is that the humans who create AIs ultimately lose control of them.

Could we lose control of the AI economy, too?

Non-humans are starting to exchange dollars and stablecoins among themselves.

This is stranger than dogs exchanging bones — and perhaps more worrying, too.

“AI models tend to resist human instruction,” the paper observes.

Brought to you by:

US Treasuries are the lifeblood of our financial system, providing collateral to support transactions, but outdated legacy systems hinder collateral mobility.

A new white paper from the ValueExchange dives into the future of collateral mobility, RWA tokenization, and what we can learn from a recent series of on-chain repo transactions conducted on Canton, the only public blockchain built for institutional finance.

With over $8T tokenized transactions flowing on Canton every month, including over $350B of on-chain US Treasuries moving daily, Canton is building the scalable, always-on capital markets infrastructure of the future.

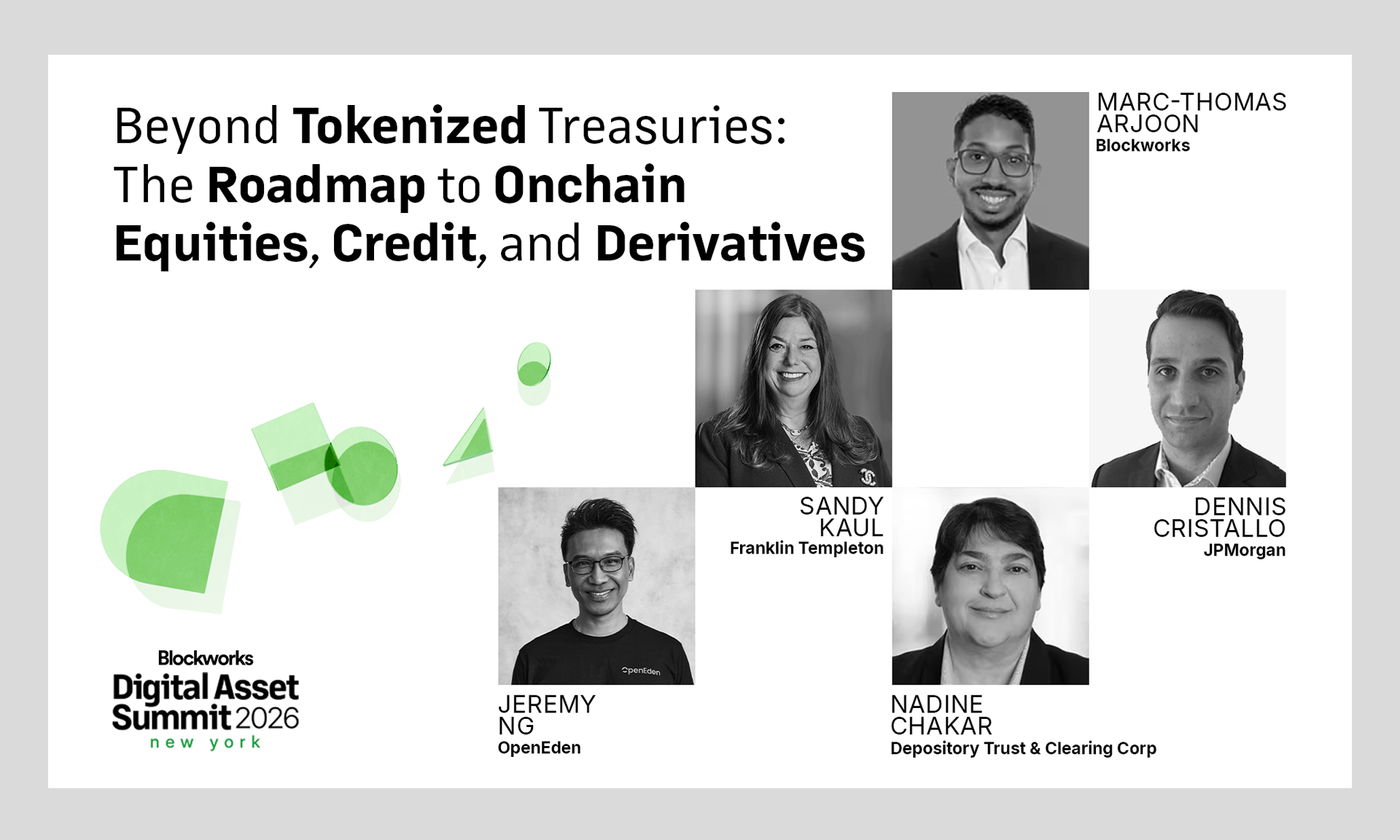

DAS begins tomorrow! The NYC lineup is bringing the biggest names in finance to the stage.

Don't miss the institutional gathering of the year — this March 24−26.