🟪 Machines can be illogical, too

AIs may not feel fear, but they still panic

Machines can be illogical and foolish, too

Mr. Spock would be disappointed in the performance of large language models, especially when it comes to investing.

Devoid of emotion, LLMs should view financial markets dispassionately — like Spock coolly calculating a 1.3% probability of survival if the Enterprise chases Klingons into a high-energy spatial anomaly full of asteroids.

Instead, academic research suggests LLMs are, weirdly, a lot like us: pattern-driven, error-prone, and occasionally reckless — like Captain Kirk ignoring Spock’s advice and ordering the Enterprise full-speed ahead.

One study found that LLMs often resort to “human heuristic-driven reasoning” instead of the cold-blooded calculation you might expect: “ChatGPT demonstrated a high tendency to emulate human-like reasoning when exposed to biases such as the gambler’s fallacy, zero-cost effect, ultimatum game, endowment effect, and framing effect.”

These are precisely the biases that plague us humans as investors.

The three models they tested even failed the “Linda problem,” incorrectly guessing that a domestic violence survivor was more likely to be both a bank teller and an advocate for abused women than simply a bank teller. That is the classic conjunction fallacy: two things being true together can never be more probable than one of them being true on its own.
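For anyone who wants to see why that answer cannot be right, here is a minimal sketch of the arithmetic. The probabilities are made-up illustrations, not figures from the study, and the function name `conjunction` is hypothetical.

```python
def conjunction(p_a: float, p_b_given_a: float) -> float:
    """Return P(A and B) = P(A) * P(B | A)."""
    return p_a * p_b_given_a


# Assumed, illustrative numbers only (not taken from the study).
p_teller = 0.05                 # P(she is a bank teller)
p_advocate_given_teller = 0.90  # P(she is also an advocate | she is a teller)

p_teller_and_advocate = conjunction(p_teller, p_advocate_given_teller)

print(f"P(teller)              = {p_teller:.3f}")
print(f"P(teller and advocate) = {p_teller_and_advocate:.3f}")

# P(B | A) can never exceed 1, so the conjunction can never exceed P(A),
# however well the "advocate" description seems to fit.
assert p_teller_and_advocate <= p_teller
```

Even if nearly every bank teller with her background were also an advocate, the combined description would still be the less likely one.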

Other researchers report that LLMs are especially prone to anchoring bias, allowing their forecasts to be “significantly influenced by prior mention of high or low values.”

What could be more Kahneman-and-Tversky than anchoring on the last number put in front of you?

Somewhere, Spock is shaking his head in despair at the thought of machines falling for such a simple trick.

And it may be even worse than that.

Researchers studying machine psychology warn that AIs sometimes fall for behavioral tricks even harder than we do: “Beyond reflecting the biases we have as individuals, the biases presented in the [AI] algorithms tend to be systematically amplified.”

It’s hard to know what to make of all this.

The entire premise of behavioral finance is that investors err so often because emotion overrides reason and complexity forces us into over-simplification.

But LLMs can’t experience the sweaty palms of a hedge fund manager watching his biggest holding tank; nor should they have to struggle with complexity, powered as they are by giant data centers.

So why are they making these behavioral mistakes? Could it mean that AI is somehow subject to human emotion?

The Godfather of AI thinks it’s possible: “There’s no reason why [an AI] can’t have all the cognitive aspects of emotions,” Geoffrey Hinton says.

So that’s one possible conclusion: LLMs already experience the same emotions that make investors succumb to panic at the lows and FOMO at the highs.

(Not the sweaty palms, though. Just the cognitive side.)

But we might also conclude the opposite: that behavioral biases don’t require emotions at all.

If so, the cognitive quirks that Spock found so irritating in his crewmates may have less to do with their succumbing to emotion and more to do with the impossible math of deciding anything under uncertainty.

This would explain why even rational agents sometimes act irrationally — and why I never learn from making the same investing mistakes over and over again.

Another possibility is simpler: LLMs may just need more training. The first study above notes that, while “even advanced models remain vulnerable to heuristic-driven error,” the newest iterations “have shown improvements in addressing cognitive biases.”

But the truth seems more complicated than that.

The most recent study I found comes to a counterintuitive conclusion: “For experiments concerning the psychology of preferences, LLM responses become increasingly irrational and human-like as the models become more advanced or larger.”

Increasingly irrational. Scary, considering that the models are always getting larger and more advanced.

To be fair, there is a lot of nuance to this last study. Models get better in some aspects of behavioral finance and worse in others. They’re more accurate than humans in short-term forecasts, but worse in long-term ones. Prompting an LLM to make rational decisions improves its accuracy, but attempting to debias it with detailed instructions worsens it.

Even Mr. Spock would struggle to calculate how all this might net out for investors and markets.

But the truth is, Spock wasn’t perfectly logical, either. Vulcans have emotions, too; they’re just trained to suppress them. And they don’t always succeed at it.

There might be a lesson in that: Maybe what we call irrational behavior is just the unavoidable cost of deciding under uncertainty.

Brought to you by:

ZKsync is the Bank Stack of Ethereum. ZKsync’s Prividium is the only Ethereum-secured blockchain platform purpose-built for institutions that demand privacy, compliance, and full control of their data.

Designed for enterprises, Prividium offers user-level privacy, compliance, cross-chain connectivity, and Ethereum-grade security out of the box. More specifically, Prividiums:

ensure that sensitive data never leaves enterprises’ infrastructure

combine role-based access controls with ZK proofs to make requirements such as AML and KYC enforceable and verifiable

give operators full authority over governance and transaction ordering

connect natively to Ethereum

Private where it matters. Connected where it counts.

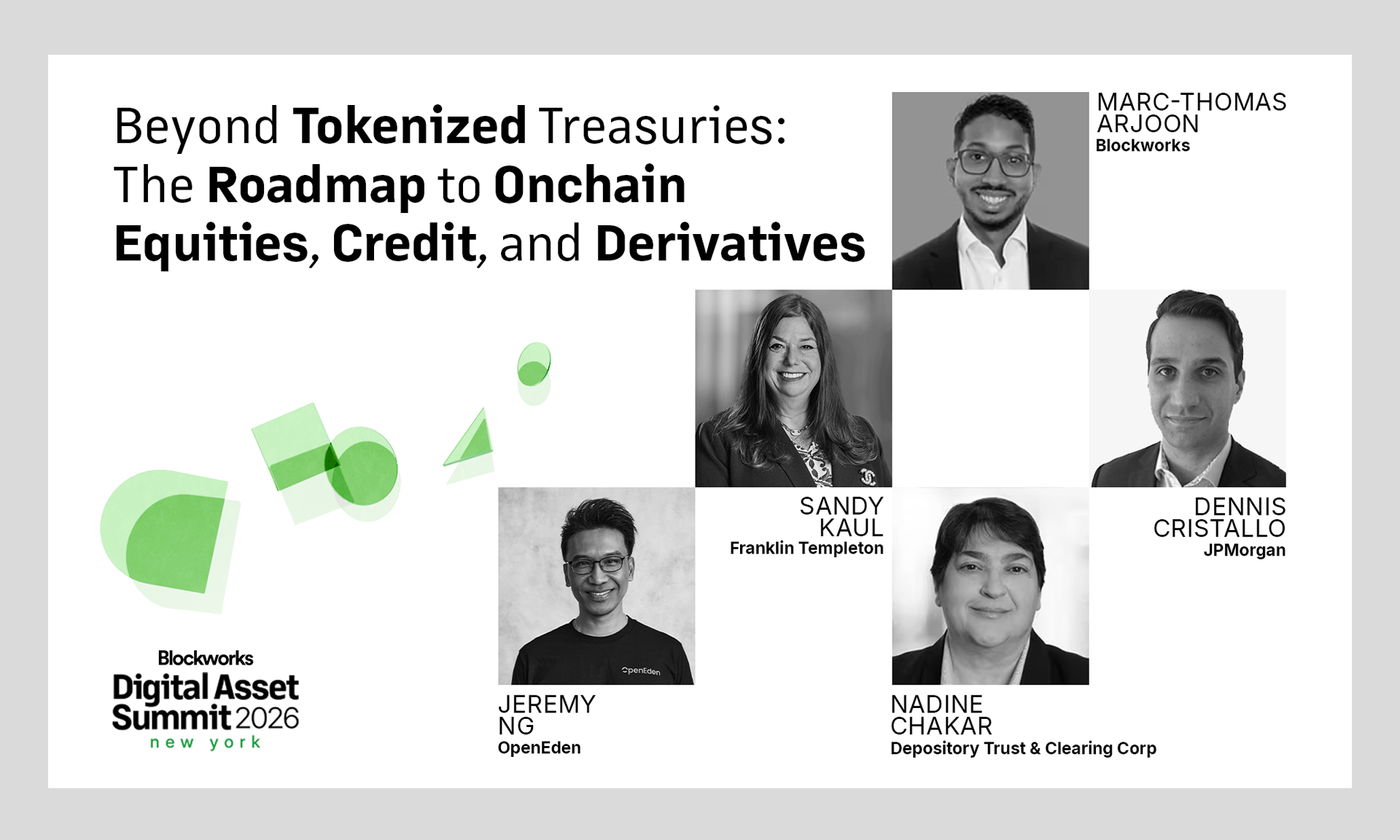

DAS NYC's lineup is bringing the biggest names in finance to the stage.

Don't miss the institutional gathering of the year — this March 24−26.